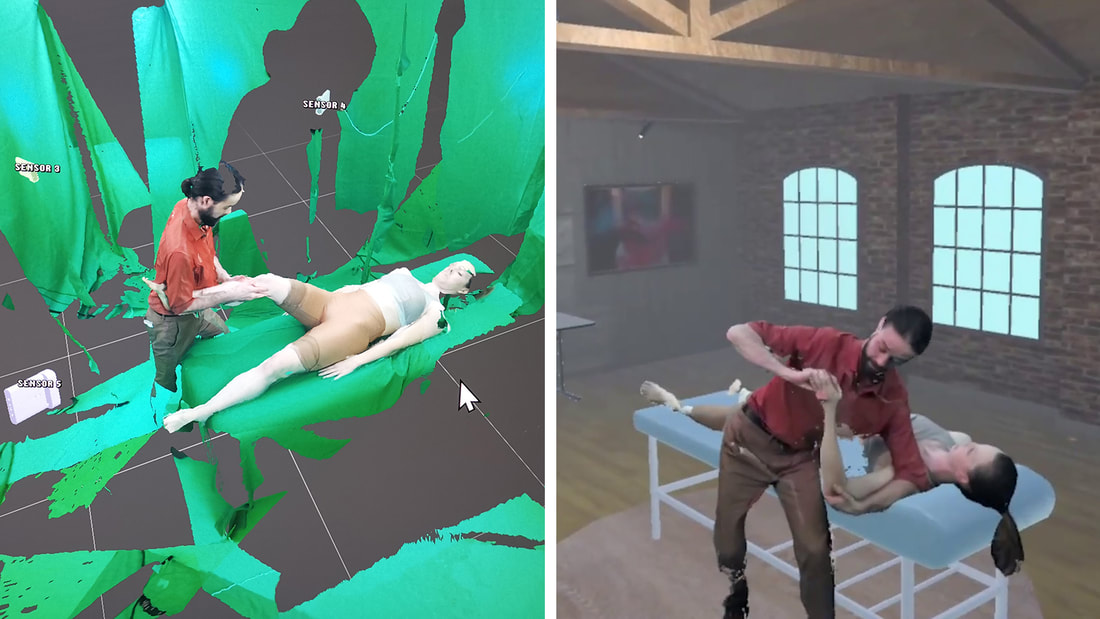

Physiotherapy Hand Skill Training Using Volumetric Video

This research addresses the pressing need for improved online education methods, particularly for hands-on skill training in physiotherapy education. We propose a novel learning tool that leverages volumetric capture and virtual reality to supplement conventional teaching methods. This system offers a three-dimensional perspective, providing a more engaging learning experience than traditional two-dimensional mediums. This immersive education approach will significantly enhance the quality of physiotherapy education and stimulate innovation within the institution.

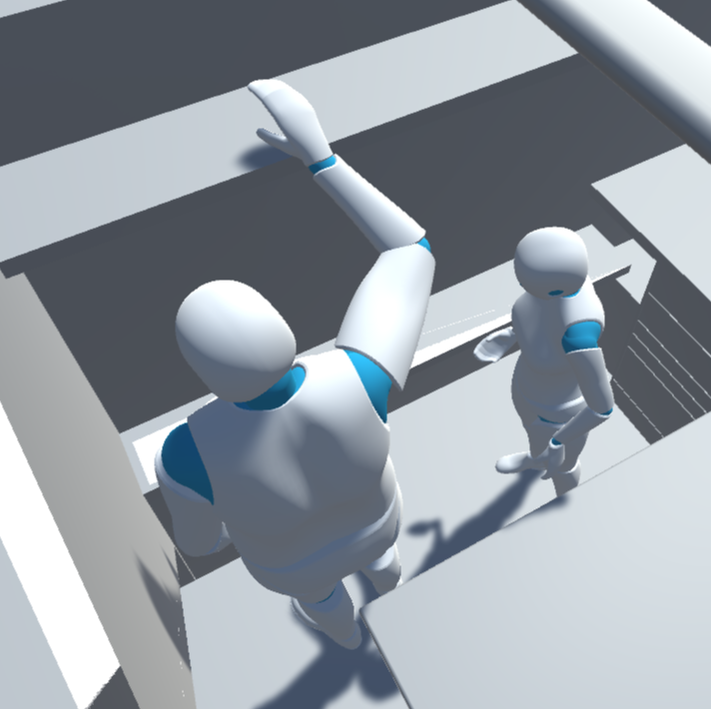

Evaluating Ergonomics as a Digital Human Model

A Digital Human Model is a model that represents the size and movement of a human. These models are widely used to evaluate the ergonomics of physical spaces during the design process. In partnership with Kadego-Cadgile, we are exploring the possibility of embodying a Digital Human Model to experience initial virtual designs. This allows a designer to understand and interact with the environment they are designing as if they were the same size as the shortest person, the tallest person, or anyone in between.

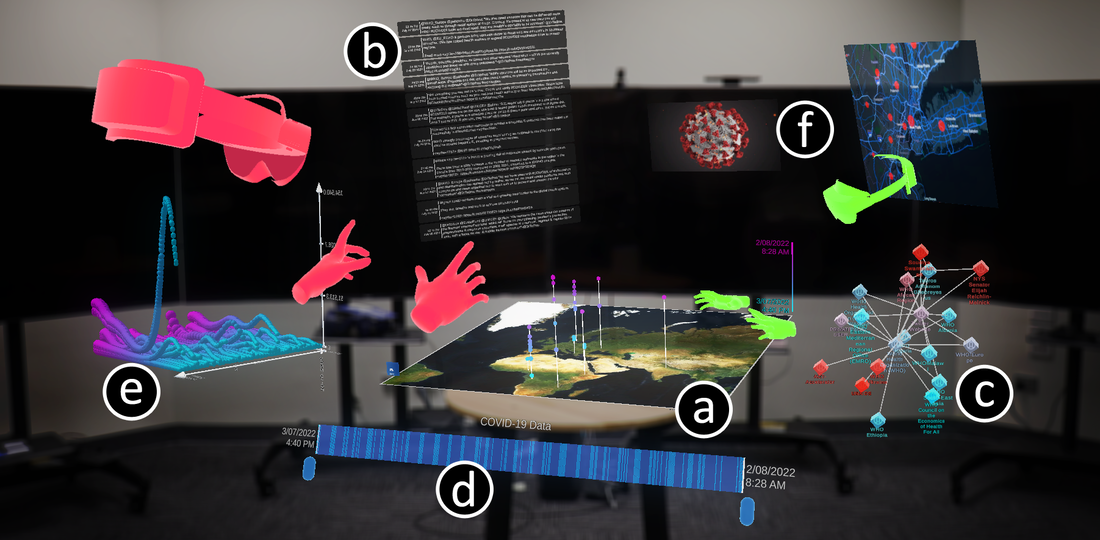

CADET: Collaborative Agile Data Exploration Toolkit

CADET is a collaborative toolkit for immersive data analytics and sensemaking. Users can visualise and explore data together with local and remote collaborators across the world to produce a shared understanding. The underlying collaborative interaction and avatar framework, built on Photon, is available as open source from our GitHub!

Parametric VR: An Interactive Virtual Reality System for Parametric Design

This project aims to create a new and intuitive set of user interactions for Virtual Reality (VR) to support parametric designers in architecture and design. Parametric tools are an emerging design technology dominating contemporary practice, yet their interfaces remain on traditional desktop computers, while VR is employed only to visualise the geometric models produced at the end of the design process. This project will generate Parametric VR, a system of VR tools to support parametric design. Key outcomes include software tools and demonstrators to support parametric algorithms and processes in VR. This will have significant benefits for design industries, allowing designers to edit parametric designs entirely in VR across the project lifecycle. This is an ARC Discovery Grant funded project.

Hand Skill Training with Custom Haptics and Real-time Visual Feedback

Custom haptic devices can enable unsupervised hands-on training and teaching of complex tasks. Existing virtual educational tools for science learning are effective in teaching theory but do not allow students to train the hand dexterity skills required in a laboratory. This research project examines authentic ways to improve hand skill performance in unsupervised, distance learning situations.

Techniques and Guidelines for Immersive Data Storytelling

Developments in immersive technologies have led to the emergence of immersive analytics, which takes data visualisation beyond traditional 2D displays. At the same time, data storytelling has become a prevalent method for communicating data. Combining data storytelling and immersive analytics gives rise to “immersive data storytelling”: telling visually compelling data stories in an immersive environment such as virtual reality, augmented reality, mixed reality or 360-degree video.

Currently, immersive data storytelling is underexplored from an academic standpoint. The research project aims to develop techniques and guidelines for effective data storytelling in various immersive environments by identifying design patterns from a multidisciplinary perspective, leveraging techniques from disciplines that have a pedigree in designing physical and virtual spaces, including architecture, museology, and game development.

Visualising Multi-dimensional Data in Forestry

Immersive visualisation tools are capable of displaying complex data that would be otherwise impossible to properly analyse using 2D methods. Such tools have the potential to be integrated with data systems in forest management operations. Immersive visualisation tools can facilitate the presentation and analysis of spatial and abstract tree information, as well as allowing forest planners to engage with multidimensional data.

Capturing a snapshot of trees at a moment in time through LiDAR scanning, storing a virtual representation of them in a forest digital twin, and exploring the data through immersive analytics could enable analysis and operation planning at a level unprecedented in forestry. Immersive visualisation of forestry data is an area of research still in its infancy, and to the authors' knowledge no exploration of multidimensional forestry data in immersive analytics has been conducted to date.

This project is a collaboration between the University of South Australia Interactive and Virtual Environments Lab (IVE) and OneFortyOne Plantations. The project aims to explore development of a prototype data pipeline to support interactive analysis with a forest digital twin, visualisation of spatial tree information from forestry systems, visualisation and analysis of multidimensional data, and interactive visualisation of tree selection algorithms.

Hybrid World Building in Spatial Augmented Reality

Spatial Augmented Reality (SAR), or projection-based AR, is a useful approach for large-space design assistance due to its wider field of view and the absence of bulky wearable gear. However, SAR has limitations such as occlusion and dependence on surfaces and lighting conditions. The objective of this project is to use SAR to assist with space planning and spatial design. Users arrange cardboard boxes to construct elements that fill the targeted space, and each element can be augmented with a digital counterpart in AR. The research will focus on improving user interfaces and experiences in the world-building system, drawing on an elicitation study, previous SAR design investigations, and anamorphic principles (linear perspective).

Volumetric X-ray Visualisations for OSTAR Devices

Seeing through objects is a desirable trait not just for superheroes trying to escape from a burning building or locate a villain, but for any professional who needs to consider what they cannot yet see. Examples include a construction worker who needs to know what is happening on the floor above them, or a surgeon who needs to know where an object sits within a human body. Augmented reality X-ray vision can extend people's sight into areas beyond what is humanly possible. However, this functionality has not been well explored on optical see-through augmented reality (OSTAR) devices. Our goal is to take direct, unprocessed volume data and create X-ray visualisations that work on OSTAR devices. This will make the visualisations useful for people who need to interact with the real world while remaining aware of their surroundings.

PERCOMIX: Perceiving distant collaborative activity with Mixed Reality

In many situations, we need to work together despite the distance. One way to reduce this distance is to use a Virtual Collaborative Environment (VCE) built on immersive technologies (VR and AR). With this thesis work, we seek to improve the perception of collaborative activities in VCEs. A literature review on collaboration and Mixed Reality revealed a limitation that was raised in 2002 and is still relevant today: we do not share all the perceptual information already available. We therefore decided to study the display of available data, the relevance of each piece of information for collaboration in a virtual environment, and how best to present it.